American Art History

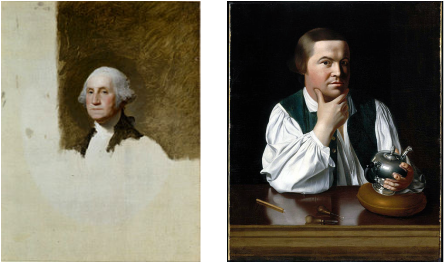

In the eighteenth century, Americans began using art to capture images of the important figures who helped shape our nation.

In the nineteenth century, American artists began taking inspiration from the world around them and from the events that helped shape who we are as a nation.

In the early 1900s, artists began to break away from old traditions and let their own view of America become the basis for their art. The style they developed is what we call "American realism".

Throughout the twentieth century, American art continued to evolve: Southwestern art reflected the peoples who originally lived in the Southwest, the Harlem Renaissance portrayed more African Americans in art, and the New Deal created art programs during the Great Depression. Abstract Expressionism followed, expressing the feelings of the artists rather than showing how things appeared.

American art has always kept a sense of realism, even in modern times, with artists reusing everyday or discarded items to make their art.